02.04.2026

How AI Avatars Shape Perceived Fairness

MCML Research Insight – With Ka Hei Carrie Lau and Enkelejda Kasneci

Imagine applying for a job and being interviewed not by a human recruiter, but by an AI avatar on your screen. The conversation feels surprisingly natural. The interviewer smiles, asks questions, and responds to your answers in real time. But after the interview, the system rejects your application. Would it matter what the AI interviewer looked like?

Key Insight

Even when an AI system makes the decision, the appearance of the interviewer can shape how fair that decision feels.

MCML Junior Member Ka Hei Carrie Lau and MCML PI Enkelejda Kasneci, together with collaborators Efe Bozkir and Philipp Stark, explored exactly this question in their study “Skin-Deep Bias: How Avatar Appearances Shape Perceptions of AI Hiring.” The paper was accepted at CHI 2026 and received an Honourable Mention Award. It investigates how the race and gender of AI avatars influence people’s perceptions of fairness, bias, and trust during automated job interviews.

Key Question

Do visual identity cues in AI avatars influence how people judge the fairness of automated decisions?

The Idea

AI systems are increasingly used in recruitment, from screening résumés to conducting automated interviews. These tools are often presented as objective decision-makers that could reduce human bias. Yet when AI interacts with people through human-like avatars, the interaction becomes social. Even if users know they are speaking to a machine, they still interpret the avatar through familiar social cues such as identity, trust, and fairness.

This raises an important question: Do people judge AI decisions differently depending on what the AI interviewer looks like? Understanding this matters because perceptions of fairness strongly influence whether people trust and accept AI systems in high-stakes contexts like hiring.

Core Idea

People respond to AI interviewers not only as algorithms but also as social actors.

Simulating an AI Job Interview

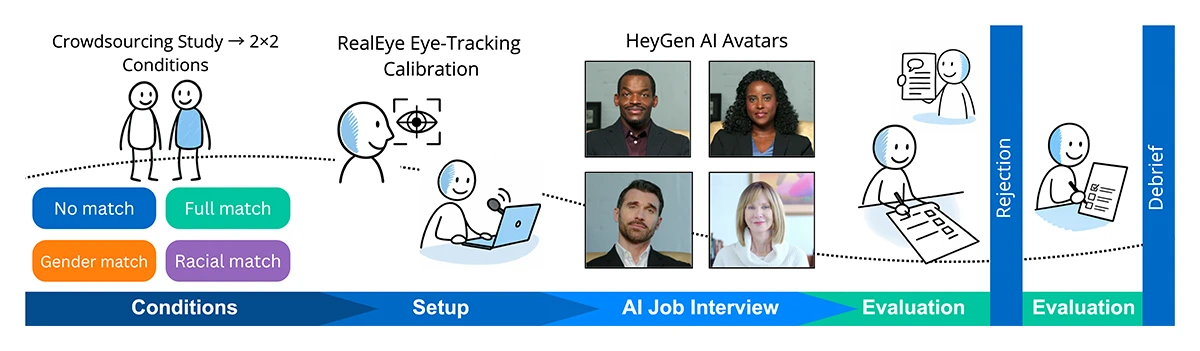

To study this question, the researchers created a realistic AI-powered interview platform. Participants joined an online interview where a photorealistic AI avatar asked job-related questions and responded dynamically during the conversation. The interview lasted about three minutes and closely resembled modern automated recruitment tools.

The key difference between participants was the appearance of the avatar interviewer. The study systematically varied two characteristics:

- Race (Black or White avatars)

- Gender (male or female avatars)

Participants were randomly assigned to one of four conditions, defined by whether the avatar matched their own identity in race, in gender, in both, or in neither. After the interview, every participant received the same outcome: a rejection. The researchers then measured how participants perceived the system, including fairness, trust, and perceived bias.

©Lau et al.

Figure 1: Study overview. We recruited participants via crowdsourcing and assigned them to a 2×2 experimental design with four avatar–participant match conditions (No Match, Gender Match, Racial Match, Full Match). The experiment procedure included eye-tracking calibration, a real-time verbal AI interview, post-interview measures, a scripted rejection, post-outcome measures, and a debrief. We created some illustrative icons with GPT-5 and then the author edited them to align with the study design and visual style.
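The four match conditions named in Figure 1 can be sketched in a few lines of Python. This is only an illustration of how the 2×2 labels follow from comparing two attributes. The `Identity` class and the `match_condition` function are hypothetical names introduced here, and the sketch does not reproduce the study's actual assignment procedure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Identity:
    """Hypothetical container for the two attributes varied in the study."""
    race: str    # e.g. "Black" or "White"
    gender: str  # e.g. "male" or "female"


def match_condition(participant: Identity, avatar: Identity) -> str:
    """Return the Figure 1 condition label for a participant-avatar pair."""
    race_match = participant.race == avatar.race
    gender_match = participant.gender == avatar.gender
    if race_match and gender_match:
        return "Full Match"
    if race_match:
        return "Racial Match"
    if gender_match:
        return "Gender Match"
    return "No Match"


# The four avatars described in the article: two races crossed with two genders.
AVATARS = [
    Identity(race, gender)
    for race in ("Black", "White")
    for gender in ("male", "female")
]

# Illustrative participant (an assumption, not study data).
participant = Identity("Black", "female")
conditions = {avatar: match_condition(participant, avatar) for avatar in AVATARS}
```

For any participant whose race and gender both appear among the avatar options, the four avatars map to four different conditions, which is what makes the design a 2×2.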

What We Learn

Even subtle design choices such as avatar appearance can shape how people interpret the fairness of AI decisions.

Key Findings

The study revealed that avatar identity cues influence how people interpret AI decisions.

- Racial mismatch increased perceptions of bias. When the avatar’s race differed from the participant’s own, participants were more likely to attribute the rejection to bias.

- Partial matches produced the lowest fairness ratings. Avatars that matched participants on only one characteristic (for example, gender but not race) were perceived as less fair than both full matches and complete mismatches.

- Identity cues attracted attention. Eye-tracking data showed that participants looked more closely at the avatar’s face when racial identities differed.

Takeaway

In high-stakes settings like hiring, fairness is shaped not only by algorithms but also by how people experience AI systems.

Why This Matters

As AI systems become part of real hiring processes, discussions about fairness often focus on the algorithms themselves. This research highlights another dimension: perceived fairness. Even if an AI system behaves objectively, the design of its interface — including the appearance of avatars — can shape how people interpret decisions. For designers and organizations deploying AI tools, this means fairness is not only a technical challenge but also a question of human experience and interaction design.

Further Reading & Reference

If you would like to learn more about how avatar identity cues influence perceptions of fairness in AI-mediated hiring, you can explore the full paper accepted at CHI 2026 (Conference on Human Factors in Computing Systems) — one of the leading international conferences in human–computer interaction.

Skin-Deep Bias: How Avatar Appearances Shape Perceptions of AI Hiring.

CHI 2026 - ACM CHI Conference on Human Factors in Computing Systems. Barcelona, Spain, Apr 13-17, 2026.
