26.07.2023

MCML at UAI 2023: Three Accepted Papers

39th Conference on Uncertainty in Artificial Intelligence (UAI 2023). Pittsburgh, PA, USA, 31.07.2023–04.08.2023

We are happy to announce that MCML researchers have contributed a total of 3 papers to UAI 2023. Congrats to our researchers!

Main Track (3 papers)

Approximately Bayes-optimal pseudo-label selection.

UAI 2023 - 39th Conference on Uncertainty in Artificial Intelligence. Pittsburgh, PA, USA, Jul 31-Aug 03, 2023.

Abstract

Semi-supervised learning by self-training heavily relies on pseudo-label selection (PLS). This selection often depends on the initial model fit on labeled data. Early overfitting might thus be propagated to the final model by selecting instances with overconfident but erroneous predictions, often referred to as confirmation bias. This paper introduces BPLS, a Bayesian framework for PLS that aims to mitigate this issue. At its core lies a criterion for selecting instances to label: an analytical approximation of the posterior predictive of pseudo-samples. We derive this selection criterion by proving Bayes-optimality of the posterior predictive of pseudo-samples. We further overcome computational hurdles by approximating the criterion analytically. Its relation to the marginal likelihood allows us to come up with an approximation based on Laplace’s method and the Gaussian integral. We empirically assess BPLS on simulated and real-world data. When faced with high-dimensional data prone to overfitting, BPLS outperforms traditional PLS methods.
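To make the reference to Laplace's method more concrete, here is a minimal sketch of a Laplace approximation to a log marginal likelihood. It is not the BPLS criterion from the paper. It uses a toy Beta-Bernoulli model, chosen only because the exact marginal likelihood is available for comparison. All function names and parameters (`exact_log_evidence`, `laplace_log_evidence`, `k`, `n`, `a`, `b`) are assumptions made for this illustration.

```python
import math


def log_beta(a, b):
    """Log of the Beta function, computed via log-gamma."""
    return math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b)


def exact_log_evidence(k, n, a, b):
    """Exact log marginal likelihood of one Bernoulli sequence with
    k successes in n trials under a Beta(a, b) prior (toy model)."""
    return log_beta(a + k, b + n - k) - log_beta(a, b)


def laplace_log_evidence(k, n, a, b):
    """Laplace approximation of the same quantity: expand the log joint
    to second order around its mode and apply the Gaussian integral."""
    alpha = k + a - 1.0          # exponent of theta in the joint
    beta = n - k + b - 1.0       # exponent of (1 - theta) in the joint
    if alpha <= 0 or beta <= 0:
        raise ValueError("the mode must lie strictly inside (0, 1)")
    theta = alpha / (alpha + beta)  # mode of the log joint (MAP estimate)
    log_joint = (alpha * math.log(theta)
                 + beta * math.log(1.0 - theta)
                 - log_beta(a, b))
    # Negative second derivative of the log joint at the mode.
    curvature = alpha / theta ** 2 + beta / (1.0 - theta) ** 2
    # One-dimensional Gaussian integral: sqrt(2 * pi / curvature).
    return log_joint + 0.5 * math.log(2.0 * math.pi) - 0.5 * math.log(curvature)


if __name__ == "__main__":
    print(exact_log_evidence(30, 50, 2.0, 2.0))
    print(laplace_log_evidence(30, 50, 2.0, 2.0))
```

In this toy setting the two values agree closely once a few dozen observations are available, which is the usual motivation for replacing an intractable evidence integral with this kind of analytical approximation.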

MCML Authors

Dr. Jann Goschenhofer (former member)

Dr. Emilio Dorigatti (former member)

Is the Volume of a Credal Set a Good Measure for Epistemic Uncertainty?

UAI 2023 - 39th Conference on Uncertainty in Artificial Intelligence. Pittsburgh, PA, USA, Jul 31-Aug 03, 2023.

Abstract

Adequate uncertainty representation and quantification have become imperative in various scientific disciplines, especially in machine learning and artificial intelligence. As an alternative to representing uncertainty via one single probability measure, we consider credal sets (convex sets of probability measures). The geometric representation of credal sets as d-dimensional polytopes implies a geometric intuition about (epistemic) uncertainty. In this paper, we show that the volume of the geometric representation of a credal set is a meaningful measure of epistemic uncertainty in the case of binary classification, but less so for multi-class classification. Our theoretical findings highlight the crucial role of specifying and employing uncertainty measures in machine learning in an appropriate way, and of being aware of possible pitfalls.
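As a rough illustration of what "volume of a credal set" can mean, the sketch below computes it for two simple cases. In the binary case the credal set over P(y = 1) is an interval, so its volume is the interval length. In the three-class case a credal set spanned by three extreme points is a triangle inside the probability simplex, so its volume is an area. The helper names (`interval_volume`, `triangle_area`) and the example distributions are assumptions for illustration. The degenerate example shows one intuition for why volume can behave oddly with more than two classes, and it is not necessarily the argument made in the paper.

```python
import math


def interval_volume(lower, upper):
    """Binary case: the credal set for P(y = 1) is [lower, upper],
    so its volume is simply the interval length."""
    if not 0.0 <= lower <= upper <= 1.0:
        raise ValueError("need 0 <= lower <= upper <= 1")
    return upper - lower


def triangle_area(p, q, r):
    """Three-class case: area of the triangle spanned by three
    distributions p, q, r, each given as 3 probabilities (points in R^3)."""
    u = [q[i] - p[i] for i in range(3)]
    v = [r[i] - p[i] for i in range(3)]
    cross = (
        u[1] * v[2] - u[2] * v[1],
        u[2] * v[0] - u[0] * v[2],
        u[0] * v[1] - u[1] * v[0],
    )
    return 0.5 * math.sqrt(sum(c * c for c in cross))


if __name__ == "__main__":
    # Binary: a wider interval means more epistemic uncertainty.
    print(interval_volume(0.2, 0.7))

    # Three classes: the full simplex as the largest possible credal set.
    print(triangle_area((1, 0, 0), (0, 1, 0), (0, 0, 1)))

    # Degenerate set: all extreme points lie on one line segment, so the
    # area is (numerically) zero although the distributions clearly differ.
    print(triangle_area((0.8, 0.1, 0.1), (0.1, 0.8, 0.1), (0.45, 0.45, 0.1)))
```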

Quantifying Aleatoric and Epistemic Uncertainty in Machine Learning: Are Conditional Entropy and Mutual Information Appropriate Measures?

UAI 2023 - 39th Conference on Uncertainty in Artificial Intelligence. Pittsburgh, PA, USA, Jul 31-Aug 03, 2023.

Abstract

The quantification of aleatoric and epistemic uncertainty in terms of conditional entropy and mutual information, respectively, has recently become quite common in machine learning. While the properties of these measures, which are rooted in information theory, seem appealing at first glance, we identify various incoherencies that call their appropriateness into question. In addition to the measures themselves, we critically discuss the idea of an additive decomposition of total uncertainty into its aleatoric and epistemic constituents. Experiments across different computer vision tasks support our theoretical findings and raise concerns about current practice in uncertainty quantification.
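For readers unfamiliar with the decomposition the abstract questions, here is a minimal sketch of how it is commonly computed in practice. It assumes that the second-order distribution is approximated by a small, equally weighted ensemble of predicted class distributions. That setup and the names `entropy` and `decompose` are assumptions for illustration and are not taken from the paper. Total uncertainty is the entropy of the averaged prediction, aleatoric uncertainty is the average entropy of the members (conditional entropy), and epistemic uncertainty is their difference (mutual information).

```python
import math


def entropy(p):
    """Shannon entropy in bits of a discrete distribution p."""
    return -sum(x * math.log2(x) for x in p if x > 0)


def decompose(member_probs):
    """Additive decomposition for an equally weighted ensemble.

    member_probs: list of class-probability lists, one per ensemble member.
    Returns (total, aleatoric, epistemic), all in bits.
    """
    n = len(member_probs)
    k = len(member_probs[0])
    mean = [sum(p[j] for p in member_probs) / n for j in range(k)]
    total = entropy(mean)                                   # entropy of the mean
    aleatoric = sum(entropy(p) for p in member_probs) / n   # conditional entropy
    epistemic = total - aleatoric                           # mutual information
    return total, aleatoric, epistemic


if __name__ == "__main__":
    # Members disagree completely: all uncertainty is counted as epistemic.
    print(decompose([[1.0, 0.0], [0.0, 1.0]]))
    # Members agree on a coin flip: all uncertainty is counted as aleatoric.
    print(decompose([[0.5, 0.5], [0.5, 0.5]]))
```

Because the epistemic term is defined as a difference, it is tied to the other two by construction, which is the kind of additive structure the paper discusses critically.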

#research #top-tier-work #bischl #huellermeier #nagler

Related

09.10.2025

Rethinking AI in Public Institutions - Balancing Prediction and Capacity

Unai Fischer Abaigar explores how AI can make public decisions fairer, smarter, and more effective.

08.10.2025

MCML-LAMARR Workshop at University of Bonn

MCML and Lamarr researchers met in Bonn to exchange ideas on NLP, LLM finetuning, and AI ethics.

08.10.2025

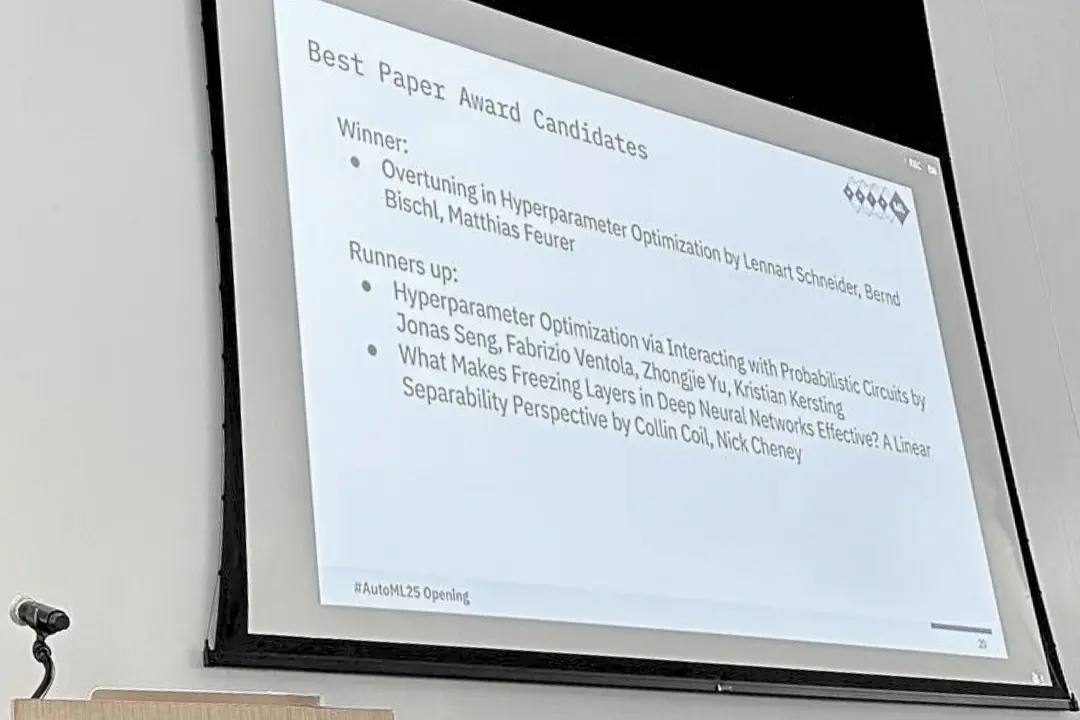

Three MCML Members Win Best Paper Award at AutoML 2025

MCML PI Matthias Feurer and Director Bernd Bischl’s paper on overtuning won Best Paper at AutoML 2025, offering insights for robust HPO.

29.09.2025

Machine Learning for Climate Action - With Researcher Kerstin Forster

Kerstin Forster researches how AI can cut emissions, boost renewable energy, and drive corporate sustainability.