05.03.2026

Foundations of Diffusion: One Map for Images and Text

MCML Research Insight – With Vincent Pauline, Tobias Höppe, Andrea Dittadi, and Stefan Bauer

From hyper-realistic video generation to protein design, diffusion models are the engine behind the current wave of generative AI. But if you try to understand how diffusion models actually work, you quickly run into heavy math. Most explanations focus only on images. If you’re working with text, biology, or graphs, you’re often left on your own with scattered papers and technical jargon.

«Diffusion models are central to generative AI, yet most introductions only cover Euclidean data and seldom clarify their connection to discrete-state analogues.»

Andrea Dittadi

MCML Junior Member

This is where the MCML authors Vincent Pauline, Tobias Höppe, Andrea Dittadi, and Stefan Bauer, along with collaborators Kirill Neklyudov and Alexander Tong, step in. In their paper, the authors ask: can we explain all diffusion models, for images and text alike, in one clean, unified way?

Problem Statement: Two Worlds, One Theory?

Diffusion models are often taught in pieces, and the theory around them is fragmented along two lines:

- The Euclidean Bias: Most introductions explain the image case, because images live in smooth, continuous space and the math is well-established.

- The Discrete Gap: For text, graphs, or biological sequences, the explanations are scattered across different papers and use different notation. As a result, learners often bounce between beginner-friendly blogs and very technical textbooks and struggle to see that the same core idea connects both.

This creates a “knowledge silo”: researchers with expertise in image generation struggle to translate their intuition to sequence modeling, and newcomers are overwhelmed by the mathematical terminology.

©Pauline et al.

Highlights: The Visual “Cheat Code” for Diffusion

A big reason this handbook is easy to follow is how it is written: the authors present continuous and discrete diffusion in parallel and make explicit how each concept in one setting matches its counterpart in the other.

1. Parallel Presentation

Key ideas are presented side-by-side so you can instantly see what corresponds to what:

🔵 Blue boxes: the “image version” (continuous space)

🔴 Red boxes: the “text/sequence version” (discrete space)

🟡 Yellow boxes: ideas that apply to both

This means we can read the paper like a guidebook: follow everything end-to-end, or focus on the parts that match our background.
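To make the blue-box/red-box pairing concrete, here is a minimal NumPy sketch of what "corrupting data" can look like in each world. It is an illustration under our own assumptions, not the paper's parameterization: a linear signal level `alpha_bar(t) = 1 - t`, Gaussian noising for continuous values, and absorbing "[MASK]" corruption for tokens (one common discrete choice among several). The names `alpha_bar`, `corrupt_continuous`, `corrupt_discrete`, and the `MASK` id are hypothetical.

```python
import numpy as np

MASK = -1  # hypothetical token id for the absorbing [MASK] state


def alpha_bar(t: float) -> float:
    """Illustrative signal level: 1 at t = 0 (clean data), 0 at t = 1 (pure noise)."""
    return 1.0 - t


def corrupt_continuous(x0: np.ndarray, t: float, rng: np.random.Generator) -> np.ndarray:
    """Image-style corruption: x_t = sqrt(a) * x0 + sqrt(1 - a) * eps with Gaussian eps."""
    a = alpha_bar(t)
    eps = rng.standard_normal(x0.shape)
    return np.sqrt(a) * x0 + np.sqrt(1.0 - a) * eps


def corrupt_discrete(tokens: np.ndarray, t: float, rng: np.random.Generator) -> np.ndarray:
    """Text-style corruption: each token survives with probability a, otherwise becomes MASK."""
    a = alpha_bar(t)
    keep = rng.random(tokens.shape) < a
    return np.where(keep, tokens, MASK)


rng = np.random.default_rng(0)
pixels = rng.uniform(-1.0, 1.0, size=8)   # stand-in for a tiny "image"
tokens = np.array([5, 17, 3, 42, 8, 11])  # stand-in for a tokenized sentence
for t in (0.0, 0.5, 1.0):
    print(t, corrupt_continuous(pixels, t, rng).round(2), corrupt_discrete(tokens, t, rng))
```

The same time variable `t` drives both functions, which is the point of the parallel presentation: only the way information is destroyed (adding noise versus masking) changes between the two settings.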

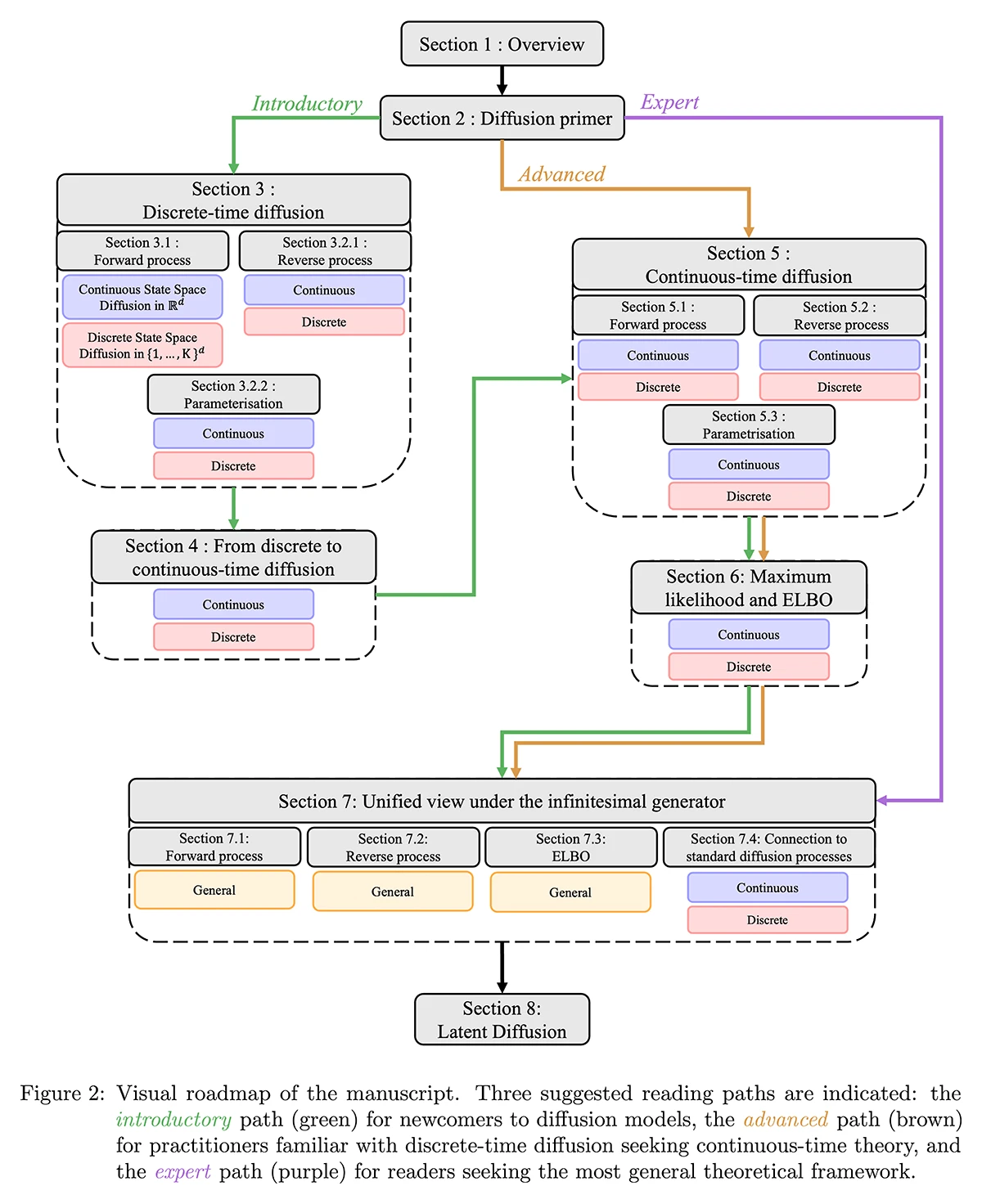

2. A Roadmap for Every Reader

The paper is structured so different readers can jump in at the right depth:

🟢 Introductory: A “Diffusion Primer” that builds intuition for why variational inference works, leading into the discrete-time formulation and training objectives without heavy prerequisites.

🟠 Advanced: Already know DDPMs? Jump straight to the continuous-time theory: stochastic differential equations (SDEs), continuous-time Markov chains (CTMCs), and the Fokker-Planck and master equations.

🟣 Expert: The most general “all-in-one” framework for diffusion across different data types.

©Pauline et al.

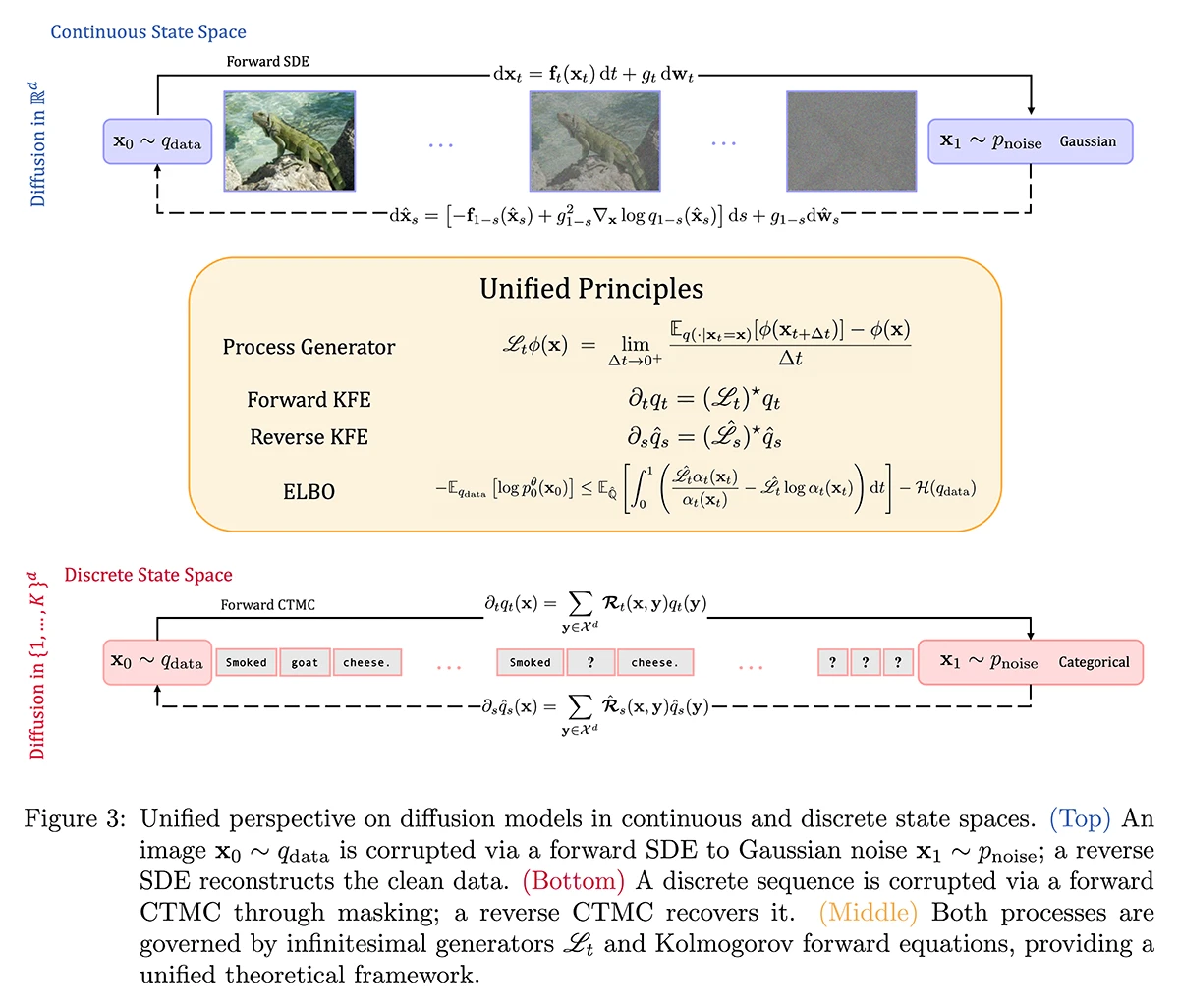
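For readers heading into the Advanced track, the sketch below shows one continuous-time process of each kind. The specific processes are our illustrative assumptions, not choices taken from the paper: a variance-preserving SDE dX = -½βX dt + √β dW with constant β, simulated with the Euler-Maruyama scheme, whose exact marginal (the solution of its Fokker-Planck equation) is Gaussian with mean x₀e^(-βt/2) and variance 1 - e^(-βt); and a CTMC on K states that jumps to a uniformly random state at rate γ, whose distribution obeys the master equation dp/dt = pQ. All function names are hypothetical.

```python
import numpy as np


def simulate_vp_sde(x0: float, beta: float, t_end: float, n_steps: int,
                    n_paths: int, rng: np.random.Generator) -> np.ndarray:
    """Euler-Maruyama for dX = -0.5 * beta * X dt + sqrt(beta) dW with constant beta."""
    dt = t_end / n_steps
    x = np.full(n_paths, x0, dtype=float)
    for _ in range(n_steps):
        dw = rng.standard_normal(n_paths) * np.sqrt(dt)  # Brownian increment ~ N(0, dt)
        x = x - 0.5 * beta * x * dt + np.sqrt(beta) * dw
    return x


def uniform_rate_matrix(k: int, gamma: float) -> np.ndarray:
    """CTMC rate matrix Q = gamma * (ones / k - I); every row sums to zero."""
    return gamma * (np.ones((k, k)) / k - np.eye(k))


def integrate_master_equation(p0: np.ndarray, q: np.ndarray,
                              t_end: float, n_steps: int) -> np.ndarray:
    """Forward-Euler integration of the master equation dp/dt = p Q (p is a row vector)."""
    dt = t_end / n_steps
    p = p0.astype(float).copy()
    for _ in range(n_steps):
        p = p + dt * (p @ q)
    return p


rng = np.random.default_rng(0)
xs = simulate_vp_sde(x0=2.0, beta=2.0, t_end=1.0, n_steps=1000, n_paths=20000, rng=rng)
print("SDE mean:", xs.mean(), "exact:", 2.0 * np.exp(-1.0))
print("SDE var: ", xs.var(), "exact:", 1.0 - np.exp(-2.0))

q = uniform_rate_matrix(k=4, gamma=1.0)
p0 = np.array([1.0, 0.0, 0.0, 0.0])
print("CTMC p_t:", integrate_master_equation(p0, q, t_end=1.0, n_steps=1000))
print("exact:   ", np.exp(-1.0) * p0 + (1.0 - np.exp(-1.0)) / 4)
```

Both processes forget their starting point in the same way: the continuous one relaxes toward a standard Gaussian, the discrete one toward the uniform distribution over states.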

3. The Unifying Lens: The Infinitesimal Generator

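At the heart of the paper (Section 7) lies the mathematical unification. The authors use the infinitesimal generator of a Markov process to show that continuous diffusion for images and discrete diffusion for text are special cases of the same formalism. The generator describes how the expected value of any function of the state changes over an infinitesimally short time step, a description that makes sense whether the state is a continuous vector or a discrete token.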
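A small numerical illustration of why the generator unifies both cases. It relies on the standard textbook definition (𝓛f)(x) = lim_(h→0) (E[f(X_h) | X_0 = x] - f(x)) / h. For an SDE dX = b(x) dt + σ dW this evaluates to b f′ + ½σ² f″; for a CTMC with rate matrix Q it evaluates to Σ_y Q(x, y)(f(y) - f(x)). The sketch below is ours, not code from the paper: it assumes one example process per setting (a variance-preserving SDE and a uniform-jump CTMC, with constants chosen arbitrarily) and checks each closed-form generator against the same short-time definition. All names are hypothetical.

```python
import numpy as np

BETA, GAMMA, K = 2.0, 1.0, 5  # illustrative constants (assumptions, not from the paper)

# --- Continuous state: dX = -0.5 * BETA * X dt + sqrt(BETA) dW ---
def generator_sde(f_prime, f_second, x: float) -> float:
    """(L f)(x) = b(x) f'(x) + 0.5 * sigma^2 f''(x), with b(x) = -0.5*BETA*x and sigma^2 = BETA."""
    return -0.5 * BETA * x * f_prime(x) + 0.5 * BETA * f_second(x)


def expected_x_squared_after(h: float, x: float) -> float:
    """Exact E[X_h^2 | X_0 = x]: X_h ~ N(x * exp(-BETA h / 2), 1 - exp(-BETA h))."""
    return np.exp(-BETA * h) * x**2 + (1.0 - np.exp(-BETA * h))


# --- Discrete state: CTMC on {0, ..., K-1} that jumps to a uniform state at rate GAMMA ---
Q = GAMMA * (np.ones((K, K)) / K - np.eye(K))


def generator_ctmc(f: np.ndarray) -> np.ndarray:
    """(L f)(x) = sum_y Q[x, y] * (f[y] - f[x]) for every state x, expanded as Qf - (row sums) * f."""
    return Q @ f - Q.sum(axis=1) * f  # the second term vanishes because rows of Q sum to 0


def expected_f_after(h: float, f: np.ndarray) -> np.ndarray:
    """Exact E[f(X_h) | X_0 = x] for every x: no jump w.p. exp(-GAMMA h), else a uniform state."""
    return np.exp(-GAMMA * h) * f + (1.0 - np.exp(-GAMMA * h)) * f.mean()


def short_time_rate(expected_after, current, h: float = 1e-6):
    """The shared definition, used for both cases: (E[f(X_h) | X_0 = x] - f(x)) / h."""
    return (expected_after(h) - current) / h


x = 0.7  # test point for f(x) = x^2 in the continuous case
print(generator_sde(lambda y: 2.0 * y, lambda y: 2.0, x),
      short_time_rate(lambda h: expected_x_squared_after(h, x), x**2))

f = np.arange(K, dtype=float) ** 2  # an arbitrary test function on the discrete states
print(generator_ctmc(f), short_time_rate(lambda h: expected_f_after(h, f), f))
```

Only the closed-form expression changes between the two settings; `short_time_rate` is identical for both, which mirrors the paper's point that one object describes both kinds of corruption.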

Instead of two separate theories, we get:

- one way to describe how data gets corrupted over time,

- one way to describe how to reverse that corruption,

- and one common training view (through an ELBO-style objective) that works across settings.
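As a concrete, low-dimensional taste of an ELBO-style training objective, the sketch below estimates the simplified noise-prediction loss popularized by DDPMs, which is a reweighted form of the Gaussian-diffusion ELBO. The setup is an assumption made so that the answer is checkable: one-dimensional data x₀ ~ N(0, 1) and a linear schedule ᾱ(t) = 1 - t. Under these assumptions the best possible noise predictor is known in closed form, E[ε | x_t] = √(1 - ᾱ(t)) x_t, and it attains loss E_t[ᾱ(t)] = 0.5, compared with 1.0 for a predictor that always outputs zero. In masked discrete diffusion, the analogous objective is typically a time-weighted cross-entropy on the masked tokens; that case is not shown. All names are hypothetical.

```python
import numpy as np


def alpha_bar(t: np.ndarray) -> np.ndarray:
    """Illustrative linear signal schedule: alpha_bar(t) = 1 - t for t in [0, 1]."""
    return 1.0 - t


def noise_prediction_loss(eps_model, n_samples: int, rng: np.random.Generator) -> float:
    """Monte Carlo estimate of E_{t, x0, eps} (eps - eps_model(x_t, t))^2."""
    x0 = rng.standard_normal(n_samples)       # toy data distribution: N(0, 1)
    t = rng.uniform(0.0, 1.0, n_samples)      # random diffusion time per sample
    eps = rng.standard_normal(n_samples)      # forward-process noise
    a = alpha_bar(t)
    x_t = np.sqrt(a) * x0 + np.sqrt(1.0 - a) * eps
    return float(np.mean((eps - eps_model(x_t, t)) ** 2))


def optimal_eps(x_t, t):
    """Hypothetical 'perfect' denoiser for N(0, 1) data: E[eps | x_t] = sqrt(1 - alpha_bar) * x_t."""
    return np.sqrt(1.0 - alpha_bar(t)) * x_t


def zero_eps(x_t, t):
    """Baseline that always predicts zero noise."""
    return np.zeros_like(x_t)


rng = np.random.default_rng(0)
print(noise_prediction_loss(optimal_eps, 200_000, rng))  # close to E_t[alpha_bar(t)] = 0.5
print(noise_prediction_loss(zero_eps, 200_000, rng))     # close to E[eps^2] = 1.0
```

In practice `eps_model` would be a neural network trained to minimize this loss; the closed-form predictor here simply shows what the minimizer looks like in the one case where it can be written down.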

Why This Matters

By telling a single, coherent story that connects VAEs, score-based models, latent diffusion, and flow matching, this work lowers the barrier to entry for discrete generative modeling. It helps researchers carry techniques developed for image generation over to discrete problems such as language modeling and biological sequence design.

©Pauline et al.

Further Reading & Reference

Interested in mastering the math of diffusion?

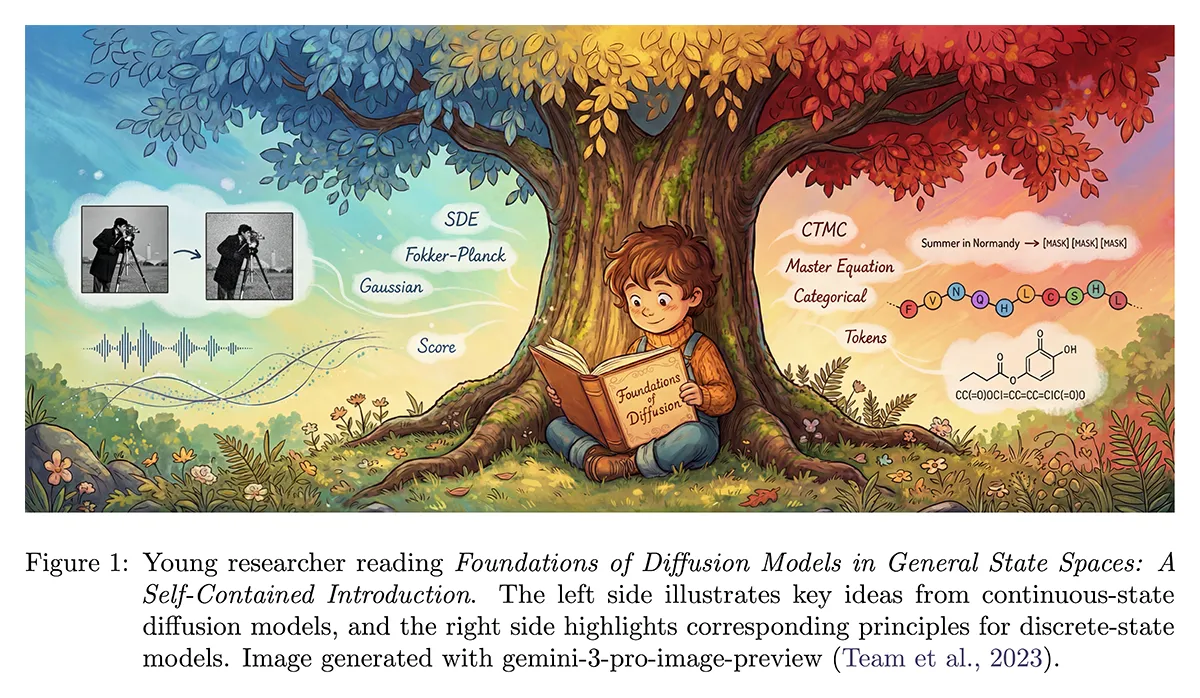

Foundations of Diffusion Models in General State Spaces: A Self-Contained Introduction.

Preprint (Dec. 2025). arXiv


@Vincent Pauline
