26.10.2025

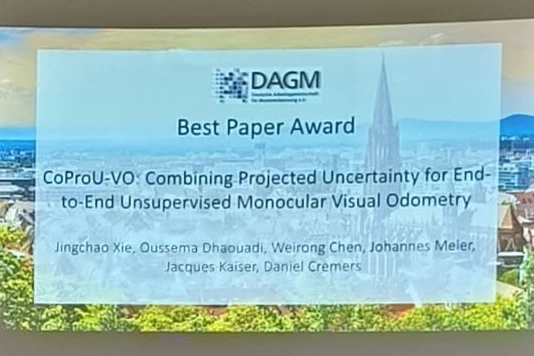

CoProU-VO Wins GCPR 2025 Best Paper Award

Award-Winning Work by MCML Director Daniel Cremers and His Team for Advances in Unsupervised Visual Odometry

The paper “CoProU-VO: Combining Projected Uncertainty for End-to-End Unsupervised Monocular Visual Odometry” by MCML Director Daniel Cremers and Junior Members Weirong Chen and Johannes Meier, together with Jingchao Xie, Oussema Dhaouadi, and Jacques Kaiser received the Best Paper Award at GCPR 2025.

Their work presents CoProU-VO, an end-to-end unsupervised visual odometry framework that propagates and combines uncertainty across temporal frames to improve robustness in dynamic scenes. Built on vision transformer backbones, it jointly learns depth, uncertainty, and camera poses, achieving state-of-the-art performance on KITTI and nuScenes datasets.

Congratulations to the team on this outstanding achievement!

Check out the full paper:

CoProU-VO: Combining Projected Uncertainty for End-to-End Unsupervised Monocular Visual Odometry.

GCPR 2025 - German Conference on Pattern Recognition. Freiburg, Germany, Oct 23-26, 2025. Best Paper Award. DOI

Related

21.05.2026

Björn Eskofier Featured in Heise Online

Björn Eskofier participated in the panel discussion “How Research Scientists Build Health AI” at the Digital Health Innovation Forum.

11.05.2026

Cordelia Schmid Featured in Süddeutsche Zeitung

Cordelia Schmid, a member of the MCML Advisory Board, was recently featured in Süddeutsche Zeitung for her work in computer vision and robotics.

08.05.2026

Right Answer, Wrong Reasoning - Is AI Thinking or Cheating?

Can AI cheat without us noticing? Our PI Barbara Plank and her team introduce a new detection method at ICLR 2026.