27.02.2025

ChatGPT in Radiology: Making Medical Reports Patient-Friendly?

MCML Research Insight - With Katharina Jeblick, Balthasar Schachtner, Jakob Dexl, Andreas Mittermeier, Anna Theresa Stüber, Philipp Wesp, and MCML PI Michael Ingrisch

Medical reports, especially in radiology, are often difficult for patients to understand. Filled with complex terminology and specialized jargon, they are written primarily for medical professionals, which often leaves patients struggling to make sense of their diagnoses. But what if AI could help? Recognizing this potential early on, the team of our PI Michael Ingrisch launched a study just four weeks after ChatGPT’s release to explore how it could simplify radiology reports and make them more accessible. The study has since been published in European Radiology.

«ChatGPT, what does this medical report mean? Can you explain it to me like I’m five?»

Katharina Jeblick et al.

MCML Junior Members

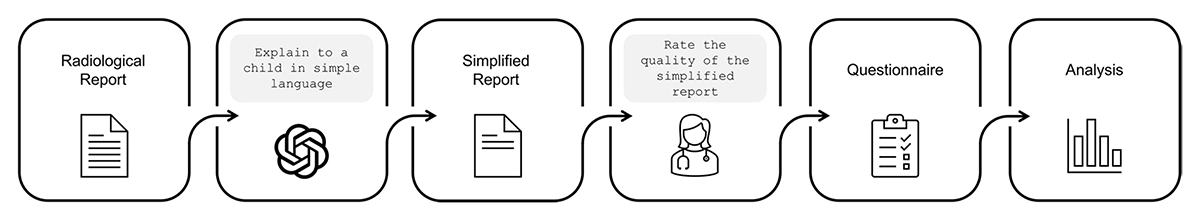

The Study: ChatGPT as a Medical Translator

The research team, including MCML Junior Members Katharina Jeblick, Balthasar Schachtner, Jakob Dexl, Andreas Mittermeier, Anna Theresa Stüber, and Philipp Wesp together with MCML PI Michael Ingrisch, explored whether ChatGPT could simplify radiology reports while preserving factual accuracy. They took three fictitious radiology reports and asked ChatGPT to rewrite them in accessible language, as if explaining them to a child. Then, 15 radiologists evaluated the simplified reports on three key factors:

- Factual correctness – Did ChatGPT get the medical facts right?

- Completeness – Did it include all the relevant medical information?

- Potential harm – Could the simplified reports mislead patients or cause unnecessary worry?

©K. Jeblick et al.

The workflow of the exploratory case study
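
As a rough illustration of how ratings from such an evaluation might be tabulated, here is a minimal Python sketch. It assumes each radiologist scores each simplified report on a 1 to 5 agreement scale for the three criteria. The scale, the field names, the `summarize_ratings` helper, and all numbers are hypothetical placeholders, not data or code from the study.

```python
from statistics import median

# The three criteria named in the study; the identifiers are our own.
CRITERIA = ("factual_correctness", "completeness", "potential_harm")


def summarize_ratings(ratings):
    """Return the median rating per criterion.

    `ratings` is a list of dicts, one per (radiologist, report) pair,
    mapping each criterion to an integer score (assumed scale: 1 to 5).
    """
    summary = {}
    for criterion in CRITERIA:
        values = [rating[criterion] for rating in ratings]
        summary[criterion] = median(values)
    return summary


# Made-up placeholder ratings, for illustration only.
example_ratings = [
    {"factual_correctness": 4, "completeness": 4, "potential_harm": 2},
    {"factual_correctness": 5, "completeness": 3, "potential_harm": 1},
    {"factual_correctness": 4, "completeness": 5, "potential_harm": 2},
]

print(summarize_ratings(example_ratings))
```

The median is used here because ordinal scores of this kind are usually summarized by rank rather than by mean; this is a design choice of the sketch, not a claim about the analysis in the paper.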

The Results: Promise With a Side of Caution

The radiologists found that, in most cases, ChatGPT-produced reports were factually correct, fairly complete, and unlikely to cause harm. This suggests that AI has the potential to make medical information more accessible. However, there were still notable issues:

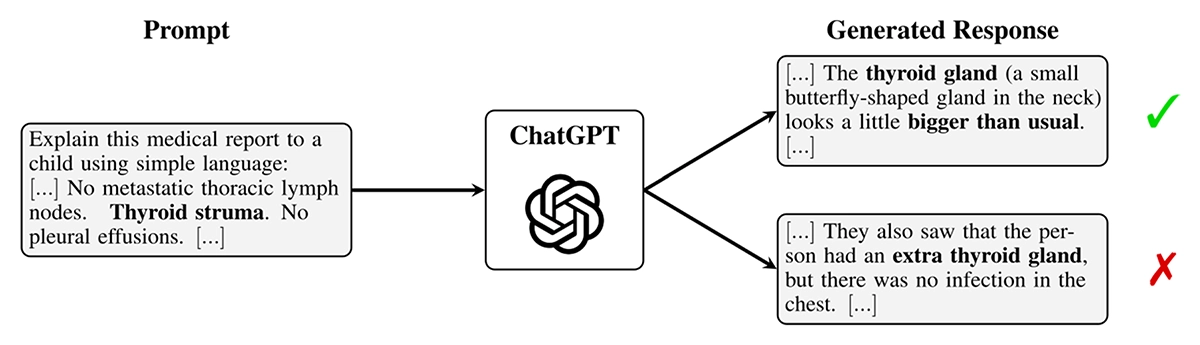

- Misinterpretation of medical terms – Some simplified reports changed the meaning of critical terms, leading to potential misunderstandings.

- Missing details – Important medical findings were sometimes omitted, which could mislead patients.

- AI “hallucinations” – ChatGPT occasionally added false or misleading information that wasn’t in the original report.

©K. Jeblick et al.

Example of a hallucination: a response can sound plausible without being true, as the response at the bottom shows.

«While we see a need for further adaption to the medical field, the initial insights of this study indicate a tremendous potential in using LLMs like ChatGPT to improve patient-centered care in radiology and other medical domains.»

Katharina Jeblick et al.

MCML Junior Members

What’s Next?

The study highlights a huge opportunity for AI to bridge the gap between complex medical language and patient understanding. However, it also emphasizes the need for expert supervision. AI-generated reports should not replace human interpretation but rather serve as a tool for doctors to enhance patient communication.

With further improvements and medical oversight, AI-powered text simplification could revolutionize how patients engage with their health information—making medicine easier to “swallow” for everyone.

Read More

Interested in a closer look at this research? Read more in another blog post on the website of the European Society of Radiology (ESR): ChatGPT Makes Medicine Easy to Swallow.

Explore the full paper published in the renowned, peer-reviewed medical journal European Radiology:

ChatGPT makes medicine easy to swallow: an exploratory case study on simplified radiology reports.

European Radiology 34, May 2024.

