21.03.2025

©aiforgood

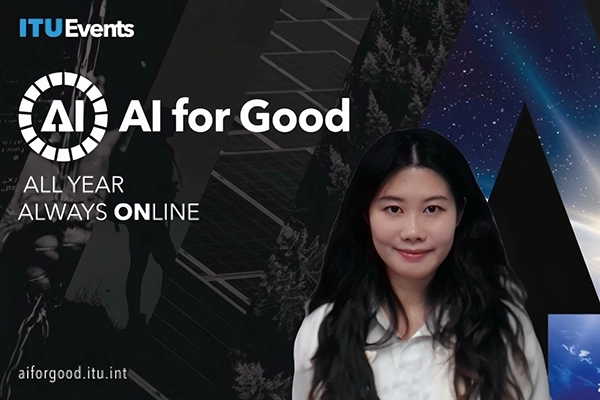

Explainable Multimodal Agents With Symbolic Representations & Can AI Be Less Biased?

Ruotong Liao at United Nations AI for Good

More than 170 people attended the online lecture given by our Junior Member Ruotong Liao, a PhD student in the group of our PI Volker Tresp, who spoke as an invited speaker at the United Nations "AI for Good" platform on Monday, 17 March 2025.

With her talk "Perceive, Remember, and Predict: Explainable Multimodal Agents with Symbolic Representations," Ruotong Liao took part in the online event "Explainable Multimodal Agents with Symbolic Representations & Can AI be less biased?"

At the event, hosted by the leading platform for artificial intelligence for sustainable development, Ruotong Liao presented her research results. Her work focuses on how integrating temporal reasoning with symbolic knowledge about evolving events enables LLMs to make structured, interpretable, and context-sensitive predictions. She presented work aimed at developing explainable multimodal agents that can perceive, remember, and predict events over time, and justify their conclusions.

Watch the full presentation in the stream recording.
